As an experiment, to push the Claude Opus 4.6 (fast mode) preview to the max, I decided to prototype a project I keep feeling like making. Introducing Local AI Prompt, my first Visual Studio extension in years. The goal was simple: get access to my models on ollama or LM Studio, through VS2026, as an agent.

Prototype Alpha 1: Start Communications

In around 5 minutes I put together, through Copilot chat, a set of instructions to build the extension from scratch, then saved it in the extension project’s readme.md. With only the readme and a simple prompt I didn’t spend time polishing, the base project structure was created in 15 minutes.

please read through the steps in readme.md then follow the tutorial to make the extension for VS2026 for use of local AI models through ollama or LM Studio; if there are missing parts for the extension development for each step, please update the readme.md to also reflect what had to be done differently

Then, with both ollama and LM Studio running, and the multiple models I swap between loaded, it took just over an hour of adjusting and fine-tuning the project myself before I had the vsix installer. The key notes: it still takes decent prompt engineering to stop Claude Sonnet 4.6 from getting into infinite loops on console commands, and I’m sure the project so far is still a little too dirty to work with comfortably.
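For anyone curious what "talking to ollama or LM Studio" boils down to, here is a minimal sketch in Python. It is not the extension's actual C# code. It assumes the default local endpoints of a stock install (Ollama's `/api/chat` on port 11434, LM Studio's OpenAI-compatible `/v1/chat/completions` on port 1234), and the helper names `build_chat_request` and `extract_reply` are made up for illustration.

```python
import json

# Assumed default endpoints of a stock local install; adjust if yours differ.
OLLAMA_CHAT_URL = "http://localhost:11434/api/chat"
LM_STUDIO_CHAT_URL = "http://localhost:1234/v1/chat/completions"


def build_chat_request(backend, model, prompt):
    """Return (url, json_body) for a single-turn, non-streaming chat request.

    Hypothetical helper: both backends accept a model name plus a list of
    role/content messages, so only the URL differs here.
    """
    body = json.dumps({
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "stream": False,  # ask for one complete JSON reply, not a stream
    })
    if backend == "ollama":
        return OLLAMA_CHAT_URL, body
    if backend == "lmstudio":
        return LM_STUDIO_CHAT_URL, body
    raise ValueError(f"unknown backend: {backend}")


def extract_reply(backend, response_text):
    """Pull the assistant text out of a non-streaming response body."""
    data = json.loads(response_text)
    if backend == "ollama":
        # Ollama returns the reply under a top-level "message" object.
        return data["message"]["content"]
    if backend == "lmstudio":
        # OpenAI-style responses nest the reply under "choices".
        return data["choices"][0]["message"]["content"]
    raise ValueError(f"unknown backend: {backend}")
```

The URL and body can be posted with any HTTP client. Keeping the request building separate from the network call makes it easy to check the payload without either server running.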

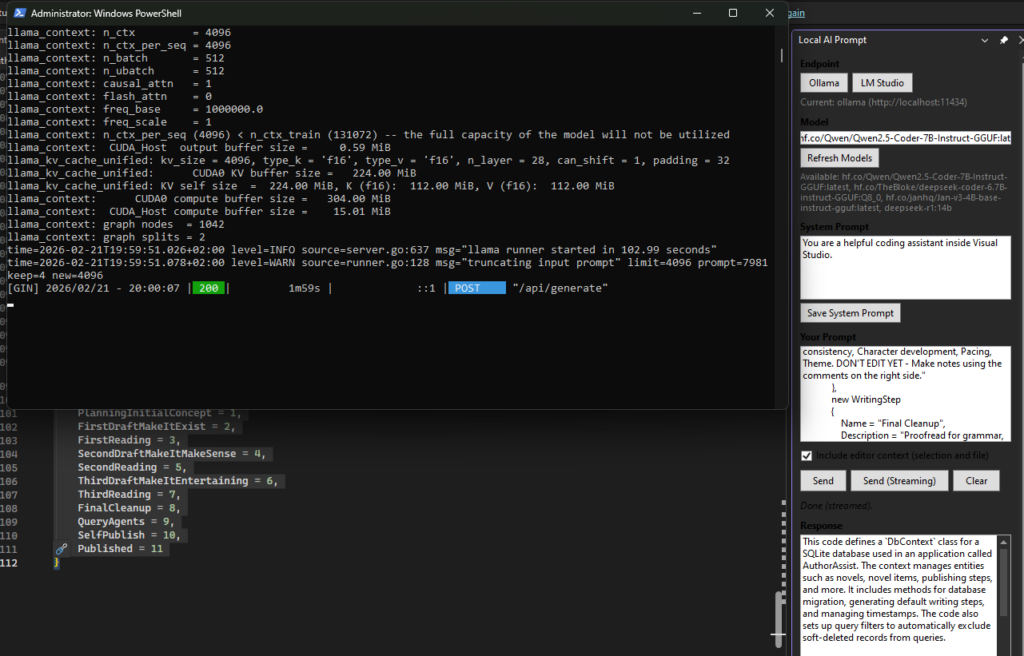

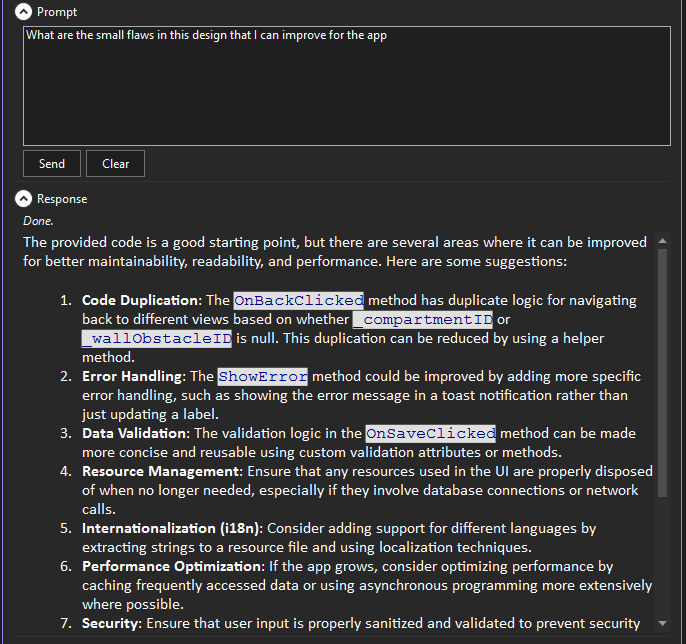

Then I tried a simple test prompt to review my data context class for Author Assist:

Please analyse the C# code and let me know if I didn't follow any correct practices and could improve it and the safety:

```csharp

<pasted DataContext.cs here>

```

I got this response from ollama, using the model hf.co/Qwen/Qwen2.5-Coder-7B-Instruct-GGUF:latest:

This code defines a `DbContext` class for a SQLite database used in an application called AuthorAssist. The context manages entities such as novels, novel items, publishing steps, and more. It includes methods for database migration, generating default writing steps, and managing timestamps. The code also sets up query filters to automatically exclude soft-deleted records from queries.

Key features include:

1. **Entity Classes**: Defines classes that map to tables in the database.

2. **DbContext Configuration**: Sets up relationships between entities and configures table names.

3. **Query Filters**: Adds a global filter to exclude deleted records from queries.

4. **Database Path Management**: Provides methods to determine the best database path based on available cloud storage options (OneDrive, Google Drive).

5. **Database Migration**: Includes a method to apply pending migrations to the database.

6. **Timestamp Management**: Automatically updates `EditedAt` timestamps for modified entities.

The code also includes an enum `WritingStepIDs` that defines constants for different writing steps. This helps in identifying and referencing specific steps within the application.

This setup is typical for applications that need a robust data model with support for querying, migration, and soft deletion of records. The use of enums for step IDs provides a clear and maintainable way to reference these entities throughout the codebase.

Not overly impressive yet, but a great base structure that at least works in VS2026. The models I experiment with regularly at present, offline, are:

# ollama list

hf.co/Qwen/Qwen2.5-Coder-7B-Instruct-GGUF:latest 6e6750388187 4.7 GB 2 weeks ago

hf.co/TheBloke/deepseek-coder-6.7B-instruct-GGUF:Q8_0 2d19b60e9e3c 7.2 GB 3 weeks ago

hf.co/janhq/Jan-v3-4B-base-instruct-gguf:latest fbac1a418ba1 2.7 GB 3 weeks ago

deepseek-r1:14b c333b7232bdb 9.0 GB 5 months ago

# LM Studio

qwen/qwen3-vl-8b

zai-org/glm-4.7-flash

qwen/qwen3-4b-2507

qwen/qwen3-vl-4b

qwen3-coder-30b-a3b-instruct-fp8

deepseek-r1-distill-qwen-14b

qwen/qwen2.5-coder-14b

edg3/lm_studio_mental_health_model

Yeah, only 1 personal model in the listing, and no, it’s not public (possibly “yet”). My C# specialist models and my own model for Godot development aren’t quite acceptable for use, for now. The point is that the goal was achieved: direct interaction with my local code models through VS2026!
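The two listings above come from two different tools, so a model picker has to merge them somehow. A rough sketch in Python, assuming the lists are fetched from Ollama's `GET /api/tags` and LM Studio's OpenAI-compatible `GET /v1/models`. The function names are hypothetical and this is not necessarily how the extension does it.

```python
import json


def ollama_model_names(tags_json):
    """Model names from the body of Ollama's GET /api/tags."""
    return [m["name"] for m in json.loads(tags_json).get("models", [])]


def lm_studio_model_ids(models_json):
    """Model ids from the body of LM Studio's GET /v1/models."""
    return [m["id"] for m in json.loads(models_json).get("data", [])]


def merged_model_list(tags_json, models_json):
    """Pair every model with its backend so a request can be routed later."""
    return (
        [("ollama", name) for name in ollama_model_names(tags_json)]
        + [("lmstudio", model_id) for model_id in lm_studio_model_ids(models_json)]
    )
```

Pairing each name with its backend, instead of joining them into one string, avoids ambiguity with names that already contain `:` or `/`.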

Yes, at this stage it has a rough (bad) style and needs manual code movements, but the first goal was achieved in under 2 hours and the core concept already feels decent: access to ollama, or LM Studio, directly through VS.

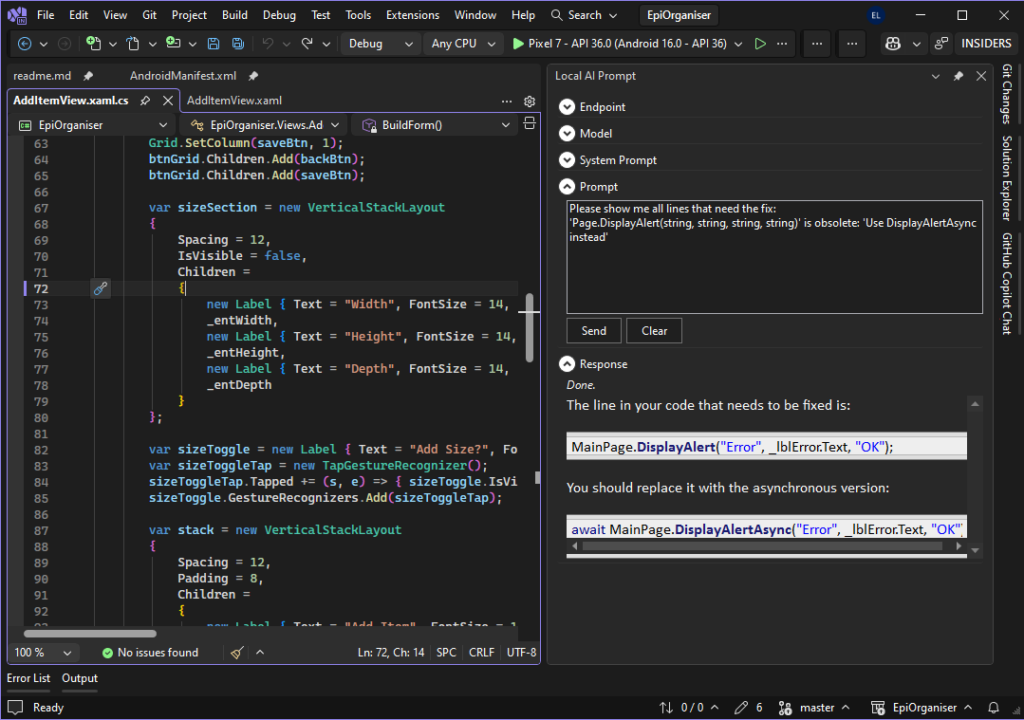

Prototype Alpha 2: Clean Up and Code Sampling

Next step: make it look better, then test the context. The context should hopefully cover the open file that currently has focus, plus any selected code.

So I cleaned it up, made it more minimalist, and added markdown highlighting. The other bug was that no context was coming through for the open file, so I made sure whichever file you focus on is given as context.
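As an illustration of what "giving the focused file as context" can mean, here is a small Python sketch that folds the file and the selection into the prompt text. The layout, the labels, and the `build_context_prompt` name are all assumptions for this example, not the extension's real format.

```python
FENCE = "`" * 3  # built at runtime so this example nests cleanly in markdown


def build_context_prompt(question, file_path, file_text, selection="", language="csharp"):
    """Combine the question, the focused file, and any selection into one prompt."""
    parts = [
        question.strip(),
        "",
        f"Focused file: {file_path}",
        f"{FENCE}{language}",
        file_text.rstrip(),
        FENCE,
    ]
    # Only mention a selection when the user actually selected something.
    if selection.strip():
        parts += [
            "",
            "Selected code:",
            f"{FENCE}{language}",
            selection.rstrip(),
            FENCE,
        ]
    return "\n".join(parts)
```

Fencing the file and the selection separately lets the model tell "the whole file" apart from "the part I am asking about".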

Yep, after a few hours of different models throwing out broken improvements with excessive hallucinations, I jumped back to the version at the end of Alpha 1 (yes, that commit) and rushed out the features one by one with way smaller context. With the smaller context, the work at least came to around 20 minutes. To be honest, I was just trying to see how large the context could be as a whole. Smaller context leads to faster implementation.

Conclusion for the Day

I’ll probably work through a few more adjustments for myself, such as context from multiple files. I don’t need my entire chat history as a reminder when I ask for a small quick change in any file; it leads to hallucinations. For instance, I used to use Visual Studio 2022. I’ve since uninstalled it and have been using the 2026 Insider preview, yet the online models still keep thinking I only use 2022. Annoying, but I can do my smaller context changes through my local models now, win-win.
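One simple way to avoid sending the whole chat history is to keep only the newest messages that fit a budget. A minimal Python sketch, assuming messages are role/content dictionaries and using character count as a crude stand-in for tokens. `trim_history` and the 4000 default are illustrative, not something the extension currently does.

```python
def trim_history(messages, max_chars=4000):
    """Keep the first system message (if any) plus the newest messages that fit.

    Character count is only a rough proxy for tokens. The newest message is
    always kept, even if it alone exceeds the budget.
    """
    system = [m for m in messages if m["role"] == "system"][:1]
    rest = [m for m in messages if m["role"] != "system"]
    budget = max_chars - sum(len(m["content"]) for m in system)

    kept = []
    for message in reversed(rest):  # walk from newest to oldest
        cost = len(message["content"])
        if kept and cost > budget:
            break  # older messages no longer fit
        kept.append(message)
        budget -= cost
    return system + list(reversed(kept))  # restore chronological order
```

Dropping the oldest turns first means stale details, like an old IDE version, fall out of the prompt before recent ones do.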

I have to ask: might this extension be something I should release for other people to try out too?

I’ve started to side with my own privacy. Even with my Linux distro, and the fact that I can’t build things like full MAUI apps on it, I’ve been convinced I already need to consider distancing myself. It was even mentioned that AI slop is starting to cause trouble for open-source projects, so I’m likely to move back to primarily C++ dev for my own projects fairly soon. Heck, I might even revive my Vulkan prototype game engine, initially wrapped in C#, which I abandoned a while ago. Perhaps I’ll enjoy moving away from the excess slop.